💻 Globally consistent state with Cloudflare Workers and Fauna

Presented by: Kristian Freeman, Rob Sutter

Originally aired on November 5, 2023 @ 9:30 AM - 10:00 AM EST

In this Full Stack Week session, join us and Fauna's Head of Developer Advocacy, Rob Sutter, to learn how to manage your application state with strong consistency while still delivering the low latency your users demand.

Visit the Full Stack Week Hub for every exciting announcement and CFTV episode — and check back all week for more!

Transcript (Beta)

Welcome to another edition of our Developer Speaker Series. I'm Kristian Freeman.

I manage our Developer Advocacy team here at Cloudflare. And I'm here with Rob Sutter from Fauna.

How you doing, Rob? Hey, Kristian. I'm doing great. Thanks. Thank you for having me here today.

So Rob is the Head of Developer Advocacy at Fauna.

And I'm super excited to have you on Cloudflare TV because I can say from experience in our Workers and, increasingly, Pages community, we have a lot of people who use Fauna.

And we're always trying to tell more of that story around data and how to use some of the really compelling things like Fauna, sort of like the next step in managing data for your applications.

We want to tell that story with Workers and Pages.

So I think this is a perfect fit. And today you're going to be talking about basically that, right?

Like integrating Fauna with Workers and Pages.

You want to tell us a little bit about what we're going to be learning today?

Yeah, that's exactly right. And it's good to hear the intro as well, because increasingly, we reach for Cloudflare, in particular Workers and Pages, like you said, as a default when we're looking for compute.

So it's a really great pairing.

The thing that we're going to be talking about today is globally consistent state.

And that's really important to applications in a lot of fields and a lot of use cases where you can't tolerate eventual consistency.

It's hard.

It's a very hard problem that Fauna solves. Our CTO and co-founder Evan likes to say that we have a technical marketing problem, because a lot of people don't believe that we can do what we do.

And you might see some of that today as we're going through it, because people are just used to pre-Fauna models, right?

But the idea of a distributed database that offers strongly consistent transactions and is fast, which is critical when you're using something like Workers, is really hard to wrap your head around.

So we're going to go through some of that. And of course, we won't give away the secret sauce, but we'll point you to some resources for some getting started tutorials.

We have a quick start that we published with Cloudflare for more advanced production-ready applications.

If we have some time, we'll actually build on that, dig around a little bit.

And we'll just see how, if you have those models or those use cases, this is really something that can't be matched out there for getting your state or your data in a strongly consistent way, while still offering performance to your users.

I love that. Yeah. And I'll say, we have a pretty short amount of time, so we're going to try and jump right into Rob's presentation.

But if you have questions, you can send us an email, livestudio@cloudflare.tv.

That'll show up here on my end. I have all the fancy tooling back here to see questions.

And we'll make sure that Rob and I answer that. And it looks like you also have a link down here, fauna.link/workers, which will be like all the resources for this talk.

So lots of things to go check out. But yeah, without further ado, maybe let's just jump into it.

Yeah, let's get into it. That link will take you directly to the tutorial.

I do just want to clarify that. The tutorial in the Cloudflare Workers docs.

At the end of that tutorial, you'll get a link to the quick start.

So if you're already familiar with Workers and Fauna, go ahead and scroll to the bottom of that page to go to the quick start.

If not, it's the best place to get started.

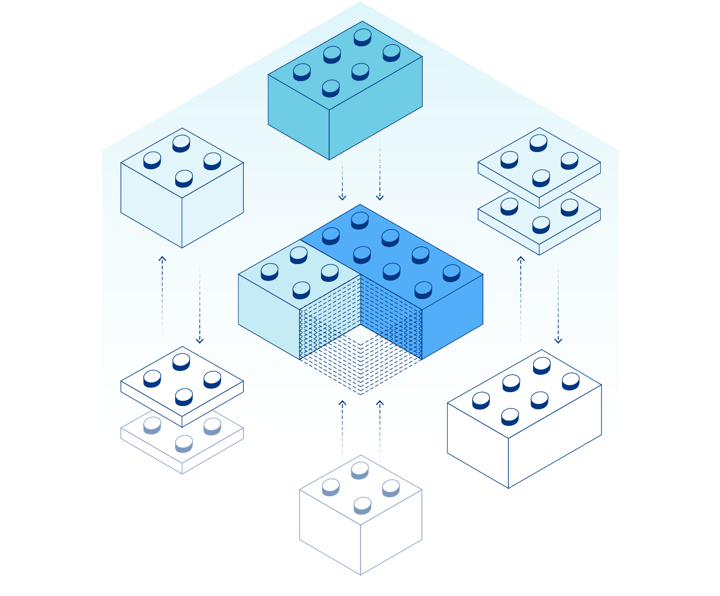

Awesome. All right. So yeah, as we were talking about, one of the advantages of using Fauna with Workers is that it is everywhere.

And if you caught Edwin's talk yesterday, he was talking about different models of data where Workers KV is in every PoP, and that's 250-plus PoPs around the world today, if I've got the numbers right.

And strongly consistent data at that scale is almost impossible to achieve.

So there's a series of trade-offs that you're going to work through here.

Fauna is not in all 250 PoPs, and that's not the goal.

Fauna is sort of set back a little further, but not all the way into a single region.

So let's start from this slide, and then we'll work into where Fauna is.

This is so great. Yeah, I saw you tweet about this yesterday. Yeah, you have to embrace this, right?

And it is about simplicity on the far side of complexity, right?

That's the most valuable thing you can have. Simplicity before complexity, like I have a single database instance running in a cluster on my desk.

That's simple, but that won't scale, especially if your users are distributed, right?

And then in between, there's this path that we've all had to walk over the past five to 10 years of distributed systems and replicating things across regions and trying to coordinate things asynchronously.

And how do we take eventual consistency into account when you go to scale out horizontally?

And all of these things that were just exceptionally hard.

And a lot of companies did a lot of great stuff to simplify that, but it required you to come along for a distributed systems ride.

And today, with Workers, Wrangler, Worktop, and Fauna, you really can get back to everything being in one repo.

You test it locally, you push it through your CI/CD pipeline through stages, and you can think of it like a monolith because of what Fauna is doing underneath.

None of that stuff in the database.

And when you treat your state synchronously, you get simplicity in your model, right?

So we'll use this as our exploration of how data works together, right?

It's an endpoint for us. When you hit a Fauna database, you hit db.fauna.com for our global region.

And there are a couple others in region groups if you intentionally limit it for data residency requirements or performance reasons, right?

But if you just use Fauna from the beginning, then you would be hitting db.fauna.com, and your data would be replicated across the world.

And that's what you see represented here, right?

And the way that we had been telling people to work with this, pre-Workers, is sort of in this client-serverless model, where your end user application, whether it's an IoT endpoint or web or mobile app, just calls that driver directly, right?

And there are a couple things that you don't get with that, that you get with Workers.

You don't get all the protection of being on the Cloudflare network.

If you have an event, let's call it, like an attack, that attack is going against your database.

The database call is by its nature going to be more expensive in dollar terms than a worker call, right?

Because there's more going on there.

So it's a more expensive mistake, or a more expensive event if you're hitting it directly.

Still viable for some use cases, but really as you grow, you want something like Workers in front of it as the API.

And this isn't a linear talk.

This is, here's a point, here's a point, here's a point, and then we're going to pull all that together to show you, to support our meme that we started with.

But these are systems metrics, because we talked about being at the edge, speed is important.

And I think it's always amazing to me, a lot of people have made a lot of noise around single millisecond reads in single regions and how important that is from a performance perspective.

And that's true. But every time I go to our status page, I wind up seeing single millisecond reads when you're in one of these region groups.

And that's really astonishing when you combine it with the top number.

If you want to see this for yourself, go to status.fauna.com. It's going to be updated in real time.

If we have an event, you're going to see it there.

We're doing this in the open. But a 45 millisecond write, and that write is transactional, it's distributed, and it's strongly consistent.

So the very next read, no matter where that read comes from, is getting the right data.

And this massively simplifies your application.

If you think about multi-phase locking and commit protocols, how we did things in the bad old days with read replicas and stuff like that, the amount of time that it takes to get those locks, to get the data propagated, and to have it available, you're at orders of magnitude longer than this.

And that's not a good user experience.

If you've got Workers completing their execution in three milliseconds, five milliseconds, a single millisecond, and you tack 600 milliseconds onto that, that's going to be a problem for you.
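To put rough numbers on that comparison, here is a small TypeScript sketch. It uses only the figures quoted in the conversation (a 3 ms Worker, a 45 ms strongly consistent write, a 600 ms write under older locking schemes); the function and constant names are ours and purely illustrative.

```typescript
// Figures quoted in the conversation; nothing here is measured.
const WORKER_MS = 3;
const STRONG_WRITE_MS = 45;
const LEGACY_WRITE_MS = 600;

// Total time a user waits when the Worker blocks on the write.
function blockingRequestMs(workerMs: number, writeMs: number): number {
  return workerMs + writeMs;
}

const fast = blockingRequestMs(WORKER_MS, STRONG_WRITE_MS);
const slow = blockingRequestMs(WORKER_MS, LEGACY_WRITE_MS);
console.log(fast + " ms vs " + slow + " ms");
```

With these inputs the blocking path costs 48 ms in one case and 603 ms in the other, which is the gap being described.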

I would say reads are comparatively easy.

It's like writes, or it's like, okay, this is the part that gets tricky.

So this kind of latency on writes is really impressive, for sure.

Yeah, because that's the hard part. Yeah, it is. And I mean, if you're way into databases and the theory behind this, this all came out of a 2012 paper at Yale.

Calvin was the name of the paper. We've got a link to that on the last slide for resources, but you can just go to fauna.link slash Calvin and read the original paper for yourself.

If you're not that into it, read the first page. It'll give you an overview.

And it's pretty interesting, like if you're going to implement something with Fauna, so that you get that confidence of, okay, this is actually real, right?

But the remaining 11 pages are for the truly committed amongst us.

Pun intended? Commit, transaction... was that pun intended or no? Yeah. You know what?

I would love to claim that. I didn't even pick up on it. So that one's yours. Point, Kristian.

Yeah. So that's the performance aspect of Fauna, right? And we talked about moving from that client-serverless model to sort of a next-gen serverless model that's enabled by Cloudflare Workers.

And that moves from like a classic serverless model where you have a single region of compute interfacing with a single region of data to this more distributed compute everywhere.

And it treats the data as one single source, but in reality, underneath that, the data is distributed as well.

And so everything becomes (where my data science people at?) K-means clustering, so that each PoP is hitting the closest read or database endpoint based on latency.

And all of this is just happening magically in your application.

All you're writing is, here's how I want you to handle compute. And here's how I want to store and retrieve state, right?

So you're thinking of everything with serverless functions.

Everything's written in one place. You can manage the structure of your database.

All of this from inside a single repo while running a globally distributed API with single-digit millisecond reads, double-digit millisecond writes.
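The idea of each PoP reaching the closest database endpoint by latency can be pictured with a toy TypeScript sketch. This is not a description of how Fauna or Cloudflare actually route requests; the region names and latency numbers are made up, and the function name is hypothetical.

```typescript
// Hypothetical latency measurements (ms) from one PoP to each replica.
// Region names and numbers are made up for illustration.
type Latencies = { [region: string]: number };

// Return the region with the lowest measured latency, or null if none.
function closestRegion(latencies: Latencies): string | null {
  let best: string | null = null;
  const regions = Object.keys(latencies);
  for (let i = 0; i < regions.length; i++) {
    const region = regions[i];
    if (best === null || latencies[region] < latencies[best]) {
      best = region;
    }
  }
  return best;
}

const fromOnePop: Latencies = { "us-east": 92, "eu-central": 4, "ap-south": 130 };
console.log(closestRegion(fromOnePop));
```

The point made in the talk is that application code never has to do this: the Worker sends its query to one endpoint and the selection happens underneath.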

So for me, pretty incredibly powerful stuff, right? And at that point, that brings us back full circle to this idea that, well, at 40 milliseconds, you can afford to block, write, and be done, right?

Depending on your use case.

In some use cases, you may want to use caching and everything varies, right?

But 40 milliseconds is very different from 600 milliseconds for a distributed write.

So, you know, in the bad old days, we just wrote it to the database and used it as our integration point.

And then we went through this path where the... that's a reasonable approach again for really high workloads.

It's just: write it to the database synchronously, get it out whenever you need it, and have the confidence that that transaction committed.

So I think I've hammered that point enough, right?

Have I mentioned that yet? Have I said it? I think that's great.

I mean, that story, I think, fits really well with the way that we talk about Workers. Like, imagine you're a full stack engineer and now suddenly, I don't know, a couple of years ago, you need to also be a database and ops expert to actually coordinate deploying all of these read replicas or multiple databases across the world or whatever. You want to do that from a performance aspect, but you don't want to actually do that, you know, hands on the keyboard, like, okay, I'm deploying all of these databases all over the world.

So again, I think yeah, this meme is amazing. I'm going to save this and cherish it.

This is like, because it reminds me of like, uh, basically like we could do one of these for Workers too, right?

Where it's like, just, just write code or like just deploy the function or whatever.

Um, and like in the middle here, there's this thing is like, Oh, like I need to deploy this function, like, and load balance it and like do all this stuff or whatever.

But then like the sort of galaxy brain high IQ thing for Workers is like, no, like you just deploy it.

And like, we, we have this thing here, this idea of like the network is the computer or whatever, which is like a point we kind of reiterate all the time where it's like, yeah, like the network is going to take care of like all of that stuff for you, right?

Like you don't need to be an expert in that stuff. I'm like, I'm not an expert in that stuff.

Like there's a lot of people here who are, I'm happy to let them make those decisions for me.

I'm just going to write a function or, you know, I'm just going to write to the database and like, there's all this stuff that happens for me, um, in a way that is like most certainly better than anything I could ever pull off.

Um, so I think this is great. And I really, I like when, um, we have like, uh, you know, places like Fauna where, where the stories kind of sync up well together.

Right. And, and I think that's always a good sign of like, these things are meant to work well together.

Like there's a reason that these stories kind of sync up and they're a really good fit.

So yeah, I think this all makes a ton of sense.

And I think it's great. Yeah. And I mean, what, what you pointed out with the idea of a DBA tuning indexes and, uh, you know, how much memory should I allocate here and query planning and all this stuff?

What the end goal of that was all just performance, right?

It was make sure that the queries execute in a reasonable amount of time.

So the users have a good experience. And when you get that performance from the API itself, then you're it's, it's so many fewer things to worry about.

So, uh, that's about half our time. What do you say we build for a little bit, go right into the, uh... Sounds great.

Right into the, um, the quick start.

So let me, let me at least advance to my demo slide so that we can all see the word demo.

It's a very exciting slide. Very exciting. Yeah. Um, and if I get down here, I can even go back to where we were.

The link that you all saw on the slide is this tutorial.

We talked about it before: "Create a serverless, globally distributed REST API with Fauna."

All the key bits are there in the title.

That's fauna.link/workers. And then at the bottom, there's that link again.

Look, I can even use the nav on the side that takes you over here to this repo, which we're going to use.

Um, if you've used Wrangler and I assume most people watching us today probably use Wrangler since they're here for the updates.

Uh, this is a Wrangler template. We're going to wrangler generate an app using this URL.

I have created an empty database just so that I had this pretty little Cloudflare TV here.

That's the only reason I did it. So you didn't see my mess of databases in the root there.

Uh, but other than that, I haven't done anything to prepare for this.

So we're going to go from this to deployed pretty quickly here. Um, we're going to wrangler generate.

We need a name and we need that URL, right? Uh, as a template argument.

So fauna-labs, fauna-workers. Does the template need an equals? We're going to find out shortly.

That's right. I was going to say, do you know more about Wrangler than I do?

I feel like you can just skip the flag actually. Oh, yeah.

Yeah. I think so. This is live coding, it's fine. It's in my history too, but y'all, I like to do it the hard way so that you can see how fast it goes.

Even with the mistakes, right?

I don't record my demos. I beat my head against the wall.

Headache driven development, Cloudflare, Cloudflare TV. Is that like if you do now that, oh yeah.

npm install. Okay. We did it right. Yeah. Step one done.

We're doing it. Yeah. So, I mean, we'll open up Visual Studio Code to look around in here.

That's exactly not the thing that I wanted you to see. That's not like, that's not a spill or anything, but it's just not the demo I wanted to show you all.

Do I trust the author of the files in this folder? Well, it was me. So absolutely not.

Um, but let's look, this is just another Wrangler app, right? We've got a wrangler.toml that was configured.

We've got an index with some routes and Worktop. If you haven't used Worktop before, um, it's there.

But what we've given you here is a collection of resources that really give you a best practice setup with, um, least-privilege access, very specific roles so that the keys that you generate only have exactly the access that they need and nothing more.

We've given you some user defined functions to encapsulate your logic.

And this allows you to unit test the business logic that's in your database, which by the way, still keeps you within those same performance parameters.

And it just gives you an approach that's scalable, but it gives you some examples that you can start from so that you can just start changing what's there rather than looking at a blank slate, right?

So if we go back to our terminal, uh, we can go straight to wrangler publish, and this will upload our script.

As we know, it won't work from the sense of the entire API because we haven't given ourselves keys, created the resources and all that stuff yet.

Right. But we've published it so that we have access to put secrets.

We can make sure everything's good to go there and we get our "hello Fauna workers" back.

Right. So we need a key. Let me take this off screen for a second because I know how you lot are, not you personally, Kristian, but you know, like someone will screenshot and stuff.

Yeah, that's right. Give myself an admin key here because I'm going to create infrastructure.

Um, I'm also going to take this off the screen and set some environment variables here.

Yeah. I think this is a thing you were kind of talking about with like the client drivers and stuff versus doing it in a Worker is like you can use these keys and like we have an encrypted secret function in Wrangler.

So like you're not going to be like plain text, posting it into the, into the Workers runtime or anything, but like you can use this model.

Like I have a secret key. I want to use it for my API requests, but your clients can still interact with that via this API that you're building out.

So, um, it's a much better way of doing it than just like having to install an npm JS client in your React app or whatever.

And then worrying about like the keys there.

Um, so I always think that's like a, that's a nice thing about serverless, but in particular, I like the way that we've done it in Workers, obviously I'm biased, but I just, I think that model makes sense to me as someone who has like literally published many a secret key into GitHub source in the past.

So I think that's great.
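The model described here, where the key lives only in the Worker's environment and clients talk to the API instead, can be sketched in TypeScript as follows. FAUNA_SECRET is the name used later in the demo and db.fauna.com is the endpoint mentioned earlier; the Bearer header layout, the type names, and both helper functions are assumptions for illustration, not code from the quick start.

```typescript
// Minimal stand-in for the Worker's environment bindings.
// FAUNA_SECRET is the name used in the demo; the rest is hypothetical.
interface Env {
  FAUNA_SECRET: string;
}

interface OutboundRequest {
  url: string;
  method: string;
  headers: { [name: string]: string };
  body: string;
}

// Build the database request inside the Worker. The secret is read from
// the environment binding and only ever appears on this outbound request.
function buildDatabaseRequest(env: Env, query: object): OutboundRequest {
  return {
    url: "https://db.fauna.com",
    method: "POST",
    headers: { Authorization: "Bearer " + env.FAUNA_SECRET },
    body: JSON.stringify(query),
  };
}

// What the browser gets back: data only, never the key.
function buildClientResponse(data: object): string {
  return JSON.stringify({ data: data });
}

const outbound = buildDatabaseRequest({ FAUNA_SECRET: "example-secret" }, { ping: true });
const reply = buildClientResponse({ ok: true });
```

Because the secret is attached on the server side of the API, nothing shipped to a React app or any other client ever contains it.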

You know, I literally never spilled a secret into GitHub until I want to say it was last week.

And then I did it twice. Oh really? Like they were for databases that I had already deleted because we do infrastructure as code, of course, you know, we created it and pull it down.

But then, yeah, like I published two and I had to go back and check.

It's embarrassing, but we're going to use wrangler secret put here for our client key.

Um, but what I'm showing you now is what you would do in your CI/CD pipeline, separate from your application to create your database.

Right. So you don't want to be doing that on every deploy.

Um, I've set it as an environment variable here. All these instructions are in the quick start.

They're in the repo, just follow step by step.

Um, but we're going to run our own, um, infrastructure as code tool here since we've given it that key.

Now that's going to populate all those resources. Then we're going to go back and get that not so secret key that I don't really care if I expose to everybody here, we're going to put it into Cloudflare using wrangler secret put, and then our app will just magically start working.

Right. So we'll see that, um, again, these, this just shows us what we're going to create here.

We plan it.

We see that we'll have those roles that we talked about for least-privilege access, the user-defined functions for encapsulating our business logic, and a collection to keep our, um, our documents in. Collections are like tables in a document or document-relational database like we have.

So we'll apply that and boom, we're done.
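As a rough picture of what least-privilege access means for the key the Worker will use, here is a simplified TypeScript model. It is not Fauna's role syntax, and the role, function, and collection names are hypothetical rather than taken from the quick start.

```typescript
// Hypothetical, simplified model of a least-privilege role. The names
// ("worker", "CreateProduct", "Products") are illustrative, not taken
// from the quick start, and this is not Fauna's role syntax.
type Action = "call" | "read" | "write";

interface Role {
  name: string;
  privileges: { [resource: string]: Action[] };
}

const workerRole: Role = {
  name: "worker",
  privileges: {
    "function:CreateProduct": ["call"],
    "function:GetProductByID": ["call"],
  },
};

// A key is allowed to do only what its role lists explicitly.
function isAllowed(role: Role, resource: string, action: Action): boolean {
  const actions = role.privileges[resource];
  return actions !== undefined && actions.indexOf(action) !== -1;
}

console.log(isAllowed(workerRole, "function:CreateProduct", "call"));
console.log(isAllowed(workerRole, "collection:Products", "read"));
```

In this sketch the role can call the two functions but has no direct access to the collection, which mirrors the idea of keys that have exactly the access they need and nothing more.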

And that's all it took. Everything in Fauna is a document.

Even your infrastructure inside Fauna is a document. So that sort of 41 millisecond target there is valid for creating, that was a global database with global tables and all of these things are there now.

Um, we're going to create a new key using one of those roles, the worker role.

And in this case, yes, we get a secret, get it while it's hot, go out there and hammer this database as much as you want for the next five minutes.

There's nothing in it, but you know, wrangler secret put, and you know what, let me see, uh, I don't remember what I called it.

FAUNA_SECRET.

Look at that. It makes a lot of sense, doesn't it? wrangler secret put FAUNA_SECRET, paste the value, and we're done.

And now at this point, we have access and I'm gonna go back to the README here for a second to grab our, uh, our test cases, but we can create documents in here and see them show up just by hitting that API endpoint.

I'll tell you what, let me go over to the quick start itself. As if I were following along, we've got this "create a product" down here.

That's a lot easier to read than trying to copy that out of the markdown in it.

Just hit the copy button.

And that endpoint that we published to would be, what did we call it?

Uh, CloudflareTV.FaunaLabs.Workers.dev. Get rid of the port. Oh, rude.

We get that product ID back, which is what we wanted, right?

So we'd go over here and we see now that we've created, come on little buddy, come on.

We've created that document via the REST API.

And no matter where we query this from, we're going to be getting that updated.

And the other routes are there, the, uh, you know, the, we can retrieve the entire thing by grabbing this.

Um, let's see. It's, you know, it's a REST API, so it works the way you think it'll work.

Right. Then we get that, that data back that we just created down here.
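The create-then-retrieve flow just shown can be sketched in TypeScript with an in-memory object standing in for the database, so the example runs anywhere. The route shapes (/products and /products/:id), the field names, and the handler itself are assumptions for illustration; in a real Worker each store access would be an awaited call to the database.

```typescript
// In-memory stand-in for the database so the sketch runs anywhere.
// Route shapes (/products, /products/:id) are assumptions for illustration.
interface Product { name: string; price: number; }
interface ApiResponse { status: number; body: unknown; }

const store: { [id: string]: Product } = {};
let nextId = 1;

function handle(method: string, path: string, body?: Product): ApiResponse {
  // POST /products creates a document and returns its ID.
  if (method === "POST" && path === "/products" && body) {
    const id = String(nextId++);
    store[id] = body;
    return { status: 201, body: { productId: id } };
  }
  // GET /products/:id returns the document that was just created.
  const match = /^\/products\/([^\/]+)$/.exec(path);
  if (method === "GET" && match) {
    const found = store[match[1]];
    return found
      ? { status: 200, body: { id: match[1], name: found.name, price: found.price } }
      : { status: 404, body: { error: "not found" } };
  }
  return { status: 404, body: { error: "no such route" } };
}

const created = handle("POST", "/products", { name: "Widget", price: 10 });
const fetched = handle("GET", "/products/1");
console.log(created.status, fetched.status);
```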

So when you're doing that... And so that's, we started from nothing with this, right?

Yeah. So that's calling, like, one of those functions that you deployed through infrastructure as code that says, like, take an ID and then sort of make the associated query.

Yeah. That's awesome.

That's super cool. Right. So this is encapsulated business logic, right? Which means we have the chance to do a lot of things here.

Uh, this is the simple version to start you off and we're shaping the return to show you how to shape that to match your domain objects, rather than just throwing a document back at you.

But you can do error handling in here.

You can do ABAC, or attribute-based access control, inside here.

Basically you have this starting point and you can start iterating in the functionality that you need to get to extremely complex use cases, but following that principle of starting from a point of working and tested, changing one thing, changing your tests (reverse that order), doing it until the tests pass, and moving on to the next thing.
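Shaping the return value and handling errors, as described above, might look like the following TypeScript sketch. In the quick start this logic lives in a user-defined function inside the database; the code below only mirrors the idea, and the document fields and type names are hypothetical.

```typescript
// A stored document in a ref / ts / data shape; the field names inside
// `data` are hypothetical.
interface StoredDocument {
  ref: { id: string };
  ts: number;
  data: { name?: string; price?: number; internalNotes?: string };
}

interface DomainProduct { id: string; name: string; price: number; }

type ShapeResult =
  | { ok: true; product: DomainProduct }
  | { ok: false; error: string };

function shapeProduct(doc: StoredDocument | null): ShapeResult {
  if (doc === null) {
    return { ok: false, error: "product not found" };
  }
  if (doc.data.name === undefined || doc.data.price === undefined) {
    return { ok: false, error: "product is missing required fields" };
  }
  // Only the fields the domain object needs are returned; internal
  // fields and metadata stay in the database.
  return {
    ok: true,
    product: { id: doc.ref.id, name: doc.data.name, price: doc.data.price },
  };
}

const shaped = shapeProduct({
  ref: { id: "42" },
  ts: 1,
  data: { name: "Widget", price: 10, internalNotes: "do not expose" },
});
console.log(JSON.stringify(shaped));
```

Because the function is small and pure, it is the kind of logic that can be unit tested one change at a time, in the way the speaker describes.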

Right. Same for these other functions here for the other HTTP verbs.

Yeah. So, but you're, you're also not like kind of exposing like complete access to like your entire document store or collection, I guess.

Right. Like, this is a defined function that I'm also maintaining in the code, and I'm just calling that function via the Worker.

Generally, I think that's like a really, uh, it feels like a very safe, um, but also like composable and, and, uh, like just a smart way to think about this versus like, uh, you know, I've written the kind of thing where it's like, Hey, like, here's my Postgres query call.

I'm just going to like take the params and plug them in there.

Like, oh, I should remember to do like, what's the SQL injection thing.

I should Google that. I should look it up, whatever. Like this is, I like the way that this all kind of ties together.

It all lives in, uh, you know, in this project together.

And like when one thing changes, you can, you know, maybe you change the function or you add a new function, like the corresponding thing here inside of your Workers function.

It just, it all makes a ton of sense. So I like the way that this is, um, yeah, that this is structured.

Yeah. And I mean, it, it also opens up for other platforms.

Like say you have embedded clients that won't go through Cloudflare for whatever reason, or say you have them running in a data center with their own private route.

You're still calling the same UDF. So you know, that you have that consistency across platforms, across languages, rather than trying to fit the same thing over and over into each pattern, every place that you go, you're just going to send a query that calls those user defined functions on another platform or even better just hit the API.

Right. And let that just keep the contract up, hold the contract.

And it doesn't matter how you implement that underneath.

Right. Yeah. And I imagine there's a lot of opportunity to kind of, looks like you guys have like a whole sort of authentication and like authorization thing here.

Like you can do things like, you know, with the way that Workers works, you can look at what, like request headers are coming in and stuff like that.

And you can make decisions probably about like how to authenticate, you know, the people that are making requests to this function and give them like the corresponding roles and stuff like that, that they need.

So, um, it's, it's really, uh, yeah, probably much more, there's much more you can do here than like just in your JavaScript front end, uh, like Fauna driver client.

Right. It's, it's the same with a lot of, um, a lot of those kinds of driver patterns.

Like you can just do a lot more when you're like working in a backend context as opposed to a front end one.

Right. So. Yeah.

Yeah. That's right. I mean, together, the combination is an application security dream, right?

Yeah. You've got Cloudflare's DDoS and, like, hostile network protection before your requests even come in.

Then you've got Workers for logic and routing to particular endpoints based on headers and other information that you provide, the existence or absence of a key, and things like that, before you even get to the database.

And then inside the database, you have ABAC so that you can look at roles.

You can look at attributes of the document.

You can look at time of day. Right. So like a full-time employee can read this Monday through Friday, nine to five in their own time zone, but cannot update it outside of that.

Right. Yeah. And it's any attribute, any attribute can be used to approve or deny a call.
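The time-of-day example can be written down as a small predicate. The TypeScript below is only a sketch of the rule as stated (full-time employees may read Monday through Friday, nine to five, in their own time zone); it is not Fauna's ABAC syntax, and the Employee type and function names are hypothetical.

```typescript
interface Employee {
  employmentType: "full-time" | "contractor";
  timeZone: string; // IANA zone name, e.g. "America/New_York"
}

// Weekday ("Mon".."Sun") and hour (0-23) in the employee's own time zone.
function localWeekdayAndHour(now: Date, timeZone: string): { weekday: string; hour: number } {
  const weekday = new Intl.DateTimeFormat("en-US", {
    timeZone: timeZone,
    weekday: "short",
  }).format(now);
  const hourText = new Intl.DateTimeFormat("en-US", {
    timeZone: timeZone,
    hour: "numeric",
    hour12: false,
  }).format(now);
  // Some runtimes render midnight as "24" when hour12 is false.
  return { weekday: weekday, hour: parseInt(hourText, 10) % 24 };
}

// Attribute-based check: employment type, day of week, and local hour.
function canRead(employee: Employee, now: Date): boolean {
  if (employee.employmentType !== "full-time") return false;
  const local = localWeekdayAndHour(now, employee.timeZone);
  const isWeekday = ["Mon", "Tue", "Wed", "Thu", "Fri"].indexOf(local.weekday) !== -1;
  return isWeekday && local.hour >= 9 && local.hour < 17;
}

const nyEmployee: Employee = { employmentType: "full-time", timeZone: "America/New_York" };
// Wednesday 17 November 2021, 15:00 UTC is 10:00 in New York.
console.log(canRead(nyEmployee, new Date("2021-11-17T15:00:00Z")));
```

Any other attribute of the caller or the document could be added to the predicate in the same way, which is the point being made about approving or denying a call.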

Um, so, and you can, and you can test all of these things and you can test them at the unit level and you can test them at the integration level.

So yeah, application security people, get in touch. We would love to help.

Hey, we are, believe it or not, we are less than a minute away from being done.

So let's, uh, give people a chance to, to see all the links again. I told you this would go by so quickly.

Um, so, so fauna.link/workers, that goes to the, uh, the new, uh, guide in our documentation, um, which Rob and his team have written.

Um, and then there's a bunch of other stuff here. Um, also go follow Rob.

You want to plug yourself on a, on a personal level? Yeah. I mean, if you love database memes like, uh, like that, then definitely follow me.

Um, but I do also try to generate useful content, uh, on a regular basis.

Uh, so yeah. Would be great for the follow. The links, top to bottom, real quick: tutorial, quick start, and the fundamental paper.

So it's sort of an increasing level of difficulty.

If you want to follow those. Cool. Thanks for having me.

Thanks so much and, uh, enjoy the rest of the speaker series, everyone.